Good morning! We’re happy you’ve joined us today for an exploration of the latest AI updates. 🗺️

Artificial Intelligence online short course from MIT

Study artificial intelligence and gain the knowledge to support its integration into your organization. If you’re looking for a competitive edge in today’s business world, this online artificial intelligence course may be the perfect option for you.

On completion of the MIT Artificial Intelligence: Implications for Business Strategy online short course, you’ll gain:

Key AI management and leadership insights to support informed, strategic decision-making.

A practical grounding in AI and its business applications, helping you to transform your organization into a future-forward business.

A road map for the strategic implementation of AI technologies in a business context.

🤯 MYSTERY AI LINK 🤯

(The mystery link can lead to ANYTHING AI related. Tools, memes, articles, videos, and more…)

Today’s Menu

Appetizer: AI poison to protect human copyright 🧪

Entrée: Human workers are still cheaper than AI 💪

Dessert: World Health Organization releases AI guidance 🩺

🔨 AI TOOLS OF THE DAY

💬 ChatGPT Prompt Generator: Generate optimal prompts for ChatGPT based on your desired outcome. → check it out

🦾 Ollama: A tool designed to help you run large language models locally. → check it out

🧐 TechTreks: Deepen your understanding of various technologies. → check it out

AI POISON TO PROTECT HUMAN COPYRIGHT 🧪

Everyone at the family reunion got food poisoning … Runs in the family. 😅

What’s new? The University of Chicago has unveiled Nightshade, an offensive data-poisoning tool designed to combat the unauthorized use of data to train machine learning models.

How does it work? Nightshade distorts the feature representations that generative AI image models learn. It turns images into “poison” samples that throw off a model during training. To human eyes, the changes look like subtle shading. A model trained on them, however, perceives a dramatically different composition and becomes confused about what the image contains. Once a model has absorbed enough poisoned images, even an innocuous prompt for a cow in space might produce a handbag instead. The tool attaches a small incremental cost to every unauthorized image used. The aim is to dissuade model trainers from sidestepping copyrights, opt-out lists, and do-not-scrape directives.

What’s the significance? The release comes amid the New York Times’ massive copyright infringement lawsuit against OpenAI. How to handle copyrighted material in AI model training has become a hot topic. Opt-out lists are meant to keep specific content out of training data, but they have proven ineffective because they can be neither enforced nor verified. So maybe poison will work? Time will tell.

HUMAN WORKERS ARE STILL CHEAPER THAN AI 💪

Sometimes, it pays to be cheap. 🤑

What’s up? The fear of AI rendering human jobs obsolete may be a bit premature, according to a study by the Massachusetts Institute of Technology (MIT).

What was found? The research focused on the cost-effectiveness of automating tasks across industries. Surprisingly, the study found that only 23% of workers could be economically replaced by AI. Retail, transportation, and healthcare were identified as more conducive to AI adoption because of favorable cost-benefit ratios. Even so, the study indicated that only 3% of visually assisted tasks (like teaching) could be automated cost-effectively today. The analysis is also purely economic: it does not account for factors like human creativity and relational skills.

Why? The high upfront cost of implementing AI systems often outweighs the benefits. Over time, AI may prove more cost-effective for many positions, but few companies outside the tech giants have the capital to implement it today. As AI evolves and cheaper ways of implementing it emerge, this may change. For now, though, the study suggests that widespread job displacement may not be as imminent as some fear.

WORLD HEALTH ORGANIZATION RELEASES AI GUIDANCE 🩺

Doctor: What’s the condition of the boy who swallowed the quarter?

Nurse: No change yet. 🤣

What’s new? The World Health Organization (WHO) has released guidance containing more than 40 recommendations for governments, technology companies, and healthcare providers. Its goal is to ensure the responsible use of large multi-modal models (LMMs), which can accept several types of data input and generate diverse outputs.

What are the risks? WHO acknowledges the risks associated with LMMs, such as producing inaccurate information, relying on poor-quality or biased data, and broader issues of accessibility and affordability. The document also stresses the need to address automation bias and cybersecurity risks, and to engage diverse stakeholders in developing and deploying LMMs.

What does WHO recommend? Its key recommendations for governments include:
- investing in public infrastructure;
- enacting laws and policies that ensure LMMs meet ethical obligations;
- establishing regulatory agencies to approve LMMs;
- implementing post-release auditing.

Developers, in turn, are urged to engage diverse stakeholders in the design process, ensure LMMs perform well-defined tasks accurately, and anticipate potential secondary outcomes.

“Generative AI technologies have the potential to improve health care but only if those who develop, regulate, and use these technologies identify and fully account for the associated risks.”

TWITTER (X) TUESDAY 🐦

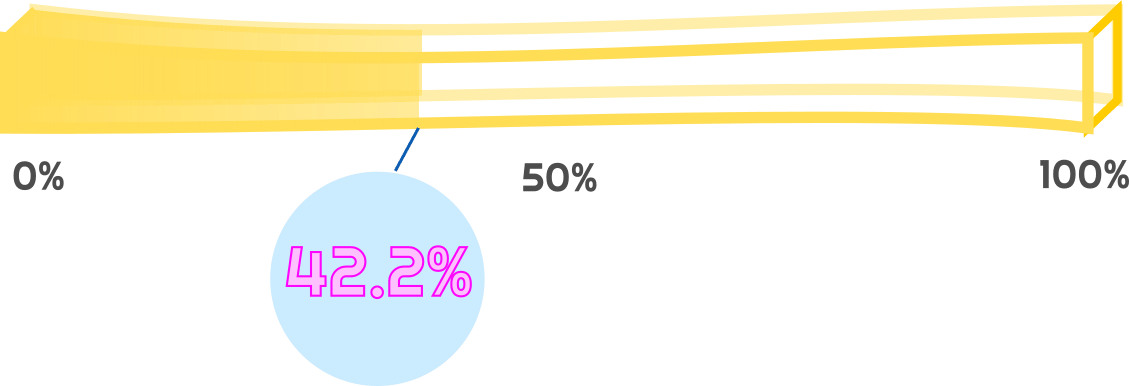

HAS AI REACHED THE SINGULARITY? CHECK OUT THE FRY METER BELOW

The Singularity Meter rises 3.0%: NVIDIA stock hits new all-time highs and continues to rocket.